Briefing Your Board on Cybersecurity part 3/3: Board Committees - Metrics and Materials

Cybersecurity is arguably the most concerning topic across corporate boardrooms these days, with Directors clamoring for data not just on risk, but on general education around this new and complex subject. This collection of tips mirrors a talk I gave at the FS-ISAC annual summit in April 2018, and I hope fellow CISOs, security practitioners, corporate governance professionals, and corporate Directors may find it helpful.

Episode 1 introduced core Corporate Governance concepts for Security Professionals, and Episode 2 discussed some strategies and materials that are appropriate for briefing a full Board session. Here in Episode 3 we will talk about focused Board Committee meetings.

Board Committee Meetings

To recap Episode 1, full Board briefings on cybersecurity will often occur annually and be focused on subject matter education. Committee sessions, however, are usually where a formal governance remit over cybersecurity is held. Cybersecurity may be addressed in the Risk Committee, Technology Committee, Audit Committee, or even a dedicated Cybersecurity Committee. These Committees usually meet quarterly and are where assurance will be sought around cyber risk mitigation.

It is in these sessions that we, as presenters, often feel pressed to deliver our message via metrics. This expectation is a natural consequence of the type of data presented to Board Committees outside of cybersecurity. Financial data is delivered very effectively via charts and graphs. Operational data such as capacity and availability fit naturally into trend lines. Compensation data benefits greatly from salary bands, histograms, and outlier identification. With virtually every topic outside cybersecurity fitting a graphical metric delivery so well, it is no wonder the same expectation is set when we discuss cybersecurity risk.

That said, it can be extremely challenging to find visualizations and data sets that deliver complete, accurate, and compelling messages about a cybersecurity program. The first reason is volumetric correlation. Data lends itself to charts and graphs when extreme absolute values or relative differences correlate with an important message. As examples you can consider the number of subscribers to an online service, the scrap rate at a manufacturing plant, or the percentage of capacity utilized at an energy plant. Those data immediately speak to you in visual format, and the components that catch your eye have a story to tell and drive useful questions. With cybersecurity, however, volume often does not correlate with importance. The graphs of “packets dropped by the firewall” or “malware blocked in email” are classic examples. When reviewing peaks and troughs in those graphs, the stories usually gravitate to new visibility gained via the introduction of a new tool, events dissipating when a stronger control is introduced upstream, or untargeted Internet attack noise that is not reflective of any change in the organization’s risk posture.

Further, cybersecurity in the absence of an event is all about proving a negative, and by nature any data reported will consist only of the events that were known and mitigated. Finally, when it comes to raw vulnerabilities or system patch levels, quantitative reporting ignores critical context such as network segmentation, exposure, and mitigating controls. 2,000 unpatched devices with no Internet connectivity are not nearly as exciting as 10 devices connected directly to the Internet with no traffic filtering. There is significant risk of delivering an inaccurate message if high and low points on a graph don’t actually correspond to areas of interest.

While I’ll share some ideas where I believe a limited set of metrics may overcome these challenges, a primary takeaway is that you should not feel limited to hard metrics and should make use of narrative content or qualitative lists where they are appropriate.

TIP: Metrics are difficult in cybersecurity for good reasons. Don’t try to force them!

Setting aside our preoccupation with metrics, let’s discuss some of those narrative or qualitative items that make good use of Committee time:

Approvals

Evaluating major cybersecurity decisions and memorializing formal approval is an important role for Board Committees. So which decisions or documents should the Committee vote on? Does the Board approve the security budget? What about strategy, policies, or procedures?

Budget is an area that gets a lot of cartoon-strip coverage when it comes to Board-level approval. In reality, though, CISOs are unlikely to be making a pitch for a new SIEM or data lake project in front of a Board Committee. A one-time corrective major uplift of the program may be an exception to the rule, but generally speaking security line-items will be analyzed, challenged, and refined within the CISO reporting line and be aggregated within a much larger package by the time they make it to the Board level. This approach doesn't mean spend detail or benchmarking data cannot be made available to the Committee and challenged at any time - just that a formal approval step is not expected.

Like budget, approval of specific security policies - much less procedures - is usually impractical at the Board level. A security policy that is strategic enough to resonate with the Board likely does not contain the level of detail needed to regulate employee activity. Further, many firms maintain numerous sub-policies within security, and the resulting frequency of updates and technical content may be a poor fit for Directors.

It is most effective for a Board Committee to approve a security strategy and empower senior management to approve policy in accordance with that strategy. A strategy document should explain the process for determining priorities, define the importance of security to the company's mission, and explain the internal governance process that will approve policy changes. A strategy should also be forward-looking with a specific beginning and end timeline (consider 2 years) and the expectation of an update at the end of that cycle.

TIP: Approve a 2-year strategy at the Board; not policies or budget line-items.

By focusing Board-level approval on strategy, full energy can be devoted to approvals in a less frequent but more impactful manner.

A Window into Senior Management

With a strategy that empowers senior management to oversee cybersecurity policy and execution, Board Committees are well-served by material that allows them to see how those duties are being carried out. While quantitative metrics can be useful for operational measures, narrative descriptions can be more effective when you are trying to explain how risk assessment and decision making are being performed by company management. In addition to a consistently-written summary of major events and themes directly in Committee presentation material, capturing minutes of internal governance meetings as an appendix can be a highly effective method to allow the Board to witness internal interaction and even see overviews of the policy changes that are being approved. Documenting questions from subsidiary leadership and deliberation over implementation of a specific control can shed light on how seriously management is taking security and how much examination they are performing into the program.

Program Operation

While an exhaustive list of controls and technology can be a poor fit for Committee material, taking the time to highlight a specific tool, process, or initiative each quarter can be an effective way to demonstrate a healthy awareness of technology, trends, and capabilities while tying back to a specific commitment in the cybersecurity strategy.

Don't hesitate to cite even a lower-severity incident example that gives insight into how process and technology function. Walk through an attempted phishing email and controls that protected the environment. Tie that back to strategic objectives and the threat objective study that drove you to implement the controls initially. Directors will be contacted - directly or indirectly - by vendors claiming to have a miracle cure for security challenges. Consistently showing awareness of technology and a healthy cycle of evaluating and implementing innovative solutions will add weight to your opinions when asked about a specific product.

TIP: Use examples. Screenshots of actual phishing emails resonate!

Third-party Opinion

Board Committees are certainly the place to deliver third-party assessment results, though trying to walk through a penetration test will likely not be the most effective use of time. Stick to maturity assessments at this level that review your program design, coverage, and benchmarking against peers. Using the categories and maturity levels from a standard like the NIST Cybersecurity Framework can be a good way to ensure continuity as you change providers down the road.

Metrics

While cybersecurity metrics are challenging and do not need to constitute the bulk of your material, there are some messages that suit them well. Once you shift from looking for 10 metrics to a handful, you can be a lot pickier about quality. Set up a screening test for each candidate metric to decide whether it is worth presenting. Criteria to consider include:

- What data is required?

Metrics are built on data, and collecting that data can be a massive effort in itself. Are the data underpinning your metric readily available, or will you need to introduce new collection or visibility technology? Building a big data pipeline just for the sake of metrics is not only expensive, but it may point to a weakness in the value of the metric itself if you hadn't a reason to collect it before. Ask yourself if the efforts that go into collecting and analyzing data provide value to your mission before you launch.

- What stories can it tell?

Walk through your charts or graphs looking for non-obvious messages that you want to communicate. Seeing a chart of increased phishing email volume, for example, shouldn't just say "we are receiving more phishing emails". That is a simple and obvious data point that doesn't warrant a visual. Better stories include "more phishing attempts are proving successful in our environment due to the email adjustments we needed to make to on-board recent acquisition X" or "the average triage time to investigate suspicious email is around 22 hours due to the lack of overnight or weekend shifts." Stories should tie into existing material and support requests or put detail around processes you've described elsewhere.

- What questions does it invite?

Put yourself in a Director seat and imagine the questions you might ask around your metrics. Always try the big bookends of "Who cares?" and "Well then why didn't you fix it before you came in here?" If either of those catches you flat-footed you might want to reconsider your metric. Just patting yourself on the back will leave Directors concerned that you are delivering the news through a strong filter. Likewise, just complaining about immaturity shows a lack of leadership or ability to get things done across the company - and those problems aren't solved by allocating more budget or approving the latest project, unfortunately for you. Good news is best tempered with caution, and bad news is best delivered with examples of collaboration and the efforts that are going into remediation. Don't throw your colleagues under the bus or point fingers, and get ahead of inevitable questions that may trip you into that.

- How sustainable is it?

Many of us have made the mistake of working weekends and late nights to stitch together a beautiful display of point-in-time data that tells a great story and invites all the right questions, only to have sentenced ourselves to quarter after quarter of repeating those heroic efforts against increasing competition for our time. As compelling as it may be, if you mock up a spellbinding visual with a lot of manual effort, consider it a prototype and keep it on the sideline until you can at least partially automate production.

Examples

Many of us have repeatedly searched the Internet for examples of cybersecurity metrics and been mystified by the dearth of material available. There is an overwhelming amount of encouragement to produce metrics and nebulous pontification about the goals, but few take the leap of actually showing some samples. If you respond to an audit or regulatory inquiry about metrics with a request for examples, don’t be surprised to conveniently hear “we’d love to show you but independence requirements dictate that we can’t advise and audit at the same time...” Given the challenges articulated it is understandable that many would be hesitant to share their imperfect constructions, but if we focus on the stories we are trying to convey and questions we would like to invite, we can identify ideas that may inspire useful instances in your environment.

- The risk register

We all struggle with reporting concrete or aggregate risks at the Board level. If we report vulnerability scan findings at the host level we may have hundreds of thousands of discrete items, most of which are profoundly uninteresting and/or correlated only with the deployment of new scanning technology. If we limit reporting to the highest level - Threat Objectives - it can be difficult to quantify a residual risk score and show trending over time.

Ways to avoid a deluge of uninteresting data include filtering to high and critical risks and focusing on remediation activity instead of vulnerabilities. Focusing on remediation activity forces you to incorporate the triage and risk rating that already take place before remediation tasks are agreed and assigned. If your vulnerability scans generate a lot of noise that is swept off the table when IT, operations, and/or developers weigh in, there is no need to communicate merely that you have noisy tools and a lot of false positives. Further, looking at remediation activity can inherit some natural "bucketing" of vulnerabilities that are all addressed by the same mitigation tasks. If a big datacenter migration in Q3 is taking hundreds of legacy servers offline in one go or your next monthly patching cycle will address 30 issues in one shot, you can capture that succinctly.

The example below shows hypothetical remediation activity to address high and critical findings for ACME, Co. over the previous year and next 6 months.

The data required here includes assigned remediation work, the assessed severity of risks that drove that work, due dates, projected completion dates, and closure dates. Those are not trivial data, but they are core components of a mature ticketing system with significant value beyond the metric.
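As a rough illustration of how those ticket fields can roll up into a quarterly remediation view, here is a minimal Python sketch. The `RemediationTask` record, its field names, and the sample tasks are all hypothetical stand-ins for whatever your ticketing system exports; projected completion dates are left out to keep it short.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class RemediationTask:
    """One assigned remediation task (all field names are hypothetical)."""
    task_id: str
    severity: str            # e.g. "critical", "high", "medium"
    opened: date
    due: date
    closed: Optional[date] = None


def status_at(task: RemediationTask, as_of: date) -> Optional[str]:
    """Classify a task as of a reporting date; None if it was not yet opened."""
    if task.opened > as_of:
        return None
    if task.closed is not None and task.closed <= as_of:
        return "closed"
    return "overdue" if task.due < as_of else "open"


def summarize(tasks, as_of, severities=("high", "critical")):
    """Count tasks per status, limited to the severities being reported."""
    counts = {"open": 0, "overdue": 0, "closed": 0}
    for task in tasks:
        if task.severity not in severities:
            continue
        status = status_at(task, as_of)
        if status is not None:
            counts[status] += 1
    return counts


# Made-up sample data for illustration only.
tasks = [
    RemediationTask("T-1", "critical", date(2018, 1, 10), date(2018, 2, 10), date(2018, 2, 5)),
    RemediationTask("T-2", "high", date(2018, 1, 20), date(2018, 3, 1), date(2018, 5, 15)),
    RemediationTask("T-3", "high", date(2018, 3, 15), date(2018, 6, 30)),
    RemediationTask("T-4", "medium", date(2018, 2, 1), date(2018, 4, 1)),
]

quarter_ends = [date(2018, 3, 31), date(2018, 6, 30)]
report = {d.isoformat(): summarize(tasks, d) for d in quarter_ends}
for quarter_end, counts in report.items():
    print(quarter_end, counts)
```

Evaluating status as of each quarter end, rather than only today, is what lets the same ticket data show trending over time.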

TIP: Report on remediation status rather than vulnerability counts to incorporate triage, risk rating, and aggregation of findings.

The stories told by this data show entering the period with a debt of unmitigated risks, and making immediate progress on overdue tasks while accumulating new findings through Q1. By the end of Q2, the story shifts to a steady accumulation of new work and many tasks beginning to go overdue. By the end of Q3, however, we see significant progress in closing out overdue and new issues together, and near the end of the year we show a small spike in issues from the implementation of a new tool or assessment of a recent acquisition.

Inspection of this visual drives several intelligent questions. Are first-line remediation staff able to keep up with the tide of findings generated by the second line? Are the due dates assigned to these tasks reasonable? How are they set? Is there consensus? Which groups and efforts have been performing this remediation work and how has it impacted other initiatives we've discussed? Discussion of this metric invites operations and IT in a non-accusatory manner and may well support points they are looking to make with governance around resourcing or slippage on other efforts.

With respect to sustainability, this metric has a good chance because the data underpinning it is valuable to so many parties. You can easily see how slices and drill-downs of this data are of immediate value to many departments across the organization. Having a wide range of support for, if not dependence on, the data will help ensure there are adequate resources devoted to its care and feeding.

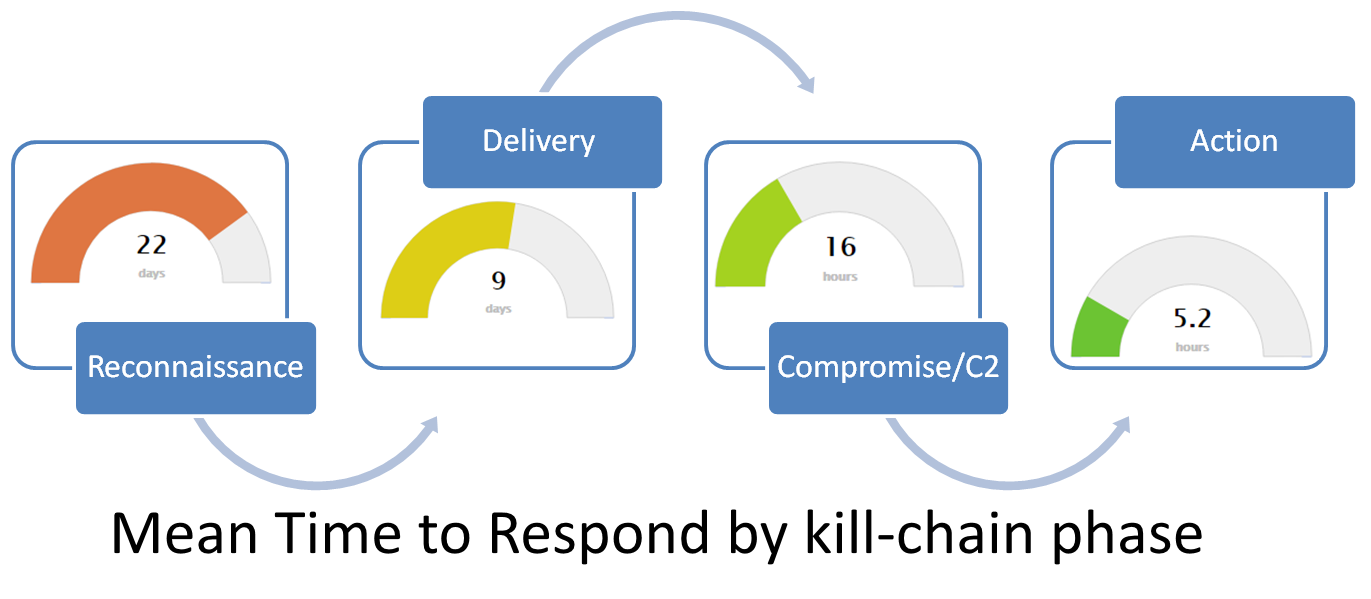

- Mean time to detect/respond/contain

The next sample captures some of the most elusive and important information possible - how well would the team respond if advanced adversaries attempted to materialize the Threat Objectives you are most concerned with today?

The data underpinning this underwhelming graph are the holy grail of security measurement, and they are correspondingly difficult to capture! Most firms will not have these data readily available, but the pursuit of them will drive significant benefit across the security program. First, you will want to target this measurement to a specific Threat Objective. Let's take sabotage, for example. Next, you need to break this across the kill chain, which requires a mature threat intelligence capability. Not only do you need access to intelligence, but you need to tag the data to filter to sabotage-related events, and then break down analysis of previous attack tactics, techniques, and procedures (TTPs) so you can identify kill-chain phases. Next, you need a rich universe of data on those TTPs in your environment with the beginning, detection, and response times well-documented. Hopefully you don't have so many actual incursions that this problem is solved for you, which is where red teaming comes in! The promise of this metric alone is reason enough to have consistent, repeatable testing. While some of these data will require manual testing and documentation, others can be automated. If spearphishing is a common TTP for the delivery phase of your Threat Objective, automated phish tests will drive the data for your response time here. Being able to measure, communicate, and track your team's performance when responding to advanced threats is challenging, but you will likely recognize many of your existing initiatives and aspirations in the tasks above that are required to generate the data.
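Once test records carry a kill-chain phase plus beginning, detection, and response times, the arithmetic itself is simple. The Python sketch below assumes a hypothetical record layout and made-up timestamps for a single Threat Objective, and it measures both intervals from the start of the activity. That is one convention among several; you may prefer to measure response from the moment of detection.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean

# Hypothetical test records for one Threat Objective (sabotage).
# Each tuple: (kill-chain phase, activity began, detected, responded).
records = [
    ("delivery", datetime(2018, 3, 1, 9, 0), datetime(2018, 3, 1, 11, 0), datetime(2018, 3, 1, 15, 0)),
    ("delivery", datetime(2018, 3, 8, 9, 0), datetime(2018, 3, 8, 13, 0), datetime(2018, 3, 9, 9, 0)),
    ("reconnaissance", datetime(2018, 3, 2, 8, 0), datetime(2018, 3, 4, 8, 0), datetime(2018, 3, 5, 8, 0)),
]


def mean_hours_by_phase(rows):
    """Return {phase: (mean hours to detect, mean hours to respond)}.

    Both intervals are measured from the start of the activity
    (an assumption made for this sketch).
    """
    detect = defaultdict(list)
    respond = defaultdict(list)
    for phase, began, detected, responded in rows:
        detect[phase].append((detected - began).total_seconds() / 3600)
        respond[phase].append((responded - began).total_seconds() / 3600)
    return {phase: (mean(detect[phase]), mean(respond[phase])) for phase in detect}


summary = mean_hours_by_phase(records)
for phase, (hours_to_detect, hours_to_respond) in summary.items():
    print(f"{phase}: detect {hours_to_detect:.1f} h, respond {hours_to_respond:.1f} h")
```

With only a handful of tests per phase a mean is easily skewed by one slow response, so it may be worth showing the number of tests alongside each figure.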

The stories told and questions prompted by that small visual can be profound. The long time to respond to reconnaissance activity may show how an intruder can mine your file shares and intranet data without detection. Delivery can show security awareness issues and a lack of reporting during phish testing. Long lead times in responding to compromise or actions on objectives can hit very close to home. Instead of saying "we need endpoint detection on servers to detect malicious activity" (Directors yawn) you can say "if the attacker who shut down Sony got into our system, we would not notice for six months". That well-supported eye-opener is sure to get your message across.

When it comes to sustainability, supporting this level of data requires a commitment to automated and manual testing, which is likely on your wish-list already.

- People, places, and things

When you deliver data about assets - be they staff, locations, or devices - don't make the mistake of assuming the populations you are speaking about are well-documented or known. Use the opportunity not only to highlight the security posture of these assets, but to point out their very existence! A map of headcount intended to show weak security awareness in certain locations, for example, may produce some surprise that you have assets in those locations to begin with. Your security data may be the first time light was really shed on which assets you are carrying, for which purposes, and in which locations.

The data required to formulate a visualization like the map above becomes increasingly complex and challenging as you integrate the security story. Simply showing where staff are located, though, can be performed very easily with some simple HR data. Weaving in the security posture will take quite a bit more doing. Rating employee security level is difficult, but a mature approach will drive knock-on value far exceeding that of the metric or report. Phish test response is a good initial measure of security awareness. Generating data here requires consistent widespread testing across your global population and careful attention to both false positives and false negatives. Compliance with mandatory security training is another element that can be included in this type of scoring, and that data may be relatively easy to capture. Other items you can incorporate into scoring include positive or negative contributions to documented incidents (to reward good behavior in addition to identifying bad) and even password strength if you have provided education about pass phrases and can run an ongoing password cracking program.

TIP: Since phish tests vary wildly in sophistication, scale results by a “difficulty factor” calculated via the percentage of recipients failing a test!
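One possible way to apply a difficulty factor is sketched below in Python. The tip only says to scale by the percentage of recipients failing a test; the specific weighting used here (a failure costs `1 - difficulty`, so failing an easy test counts for more than failing a hard one) is an assumption for illustration, as are the test names, recipients, and results.

```python
# Hypothetical results: test name -> {recipient: True if they failed (e.g. clicked)}.
results = {
    "obvious_lottery_scam": {"ana": False, "ben": False, "cho": False, "dev": True},
    "targeted_invoice_lure": {"ana": True, "ben": True, "cho": False, "dev": True},
}


def difficulty(outcomes):
    """Difficulty factor: the fraction of recipients who failed this test."""
    return sum(outcomes.values()) / len(outcomes)


def weighted_failure_score(recipient, all_results):
    """Sum of (1 - difficulty) over the tests this recipient failed.

    Failing an easy test (low difficulty) costs close to 1 point;
    failing a test that fooled nearly everyone costs close to 0.
    This weighting is an illustrative assumption, not a standard.
    """
    score = 0.0
    for outcomes in all_results.values():
        if outcomes.get(recipient):
            score += 1 - difficulty(outcomes)
    return score


factors = {name: difficulty(outcomes) for name, outcomes in results.items()}
scores = {person: weighted_failure_score(person, results) for person in ["ana", "ben", "cho", "dev"]}
print(factors)
print(scores)
```

Scores like these can then be averaged by location or business unit before they reach a map or chart, which keeps a single sophisticated test from making one region look unfairly weak.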

The security stories told and questions prompted by this data range from regional localization challenges in delivering education to increased susceptibility to social engineering in a recently outsourced call center. The non-security stories, though, may be the most interesting of all. Actually seeing how much raw headcount is in a certain area or how many applications are required to run a specific business unit may influence security-conscious decision making for years to come.

Sustainability of asset-based metrics can certainly be a challenge. Given the amount of effort IT departments are putting into configuration management databases (CMDBs), however, you will likely find a lot of help as you look to integrate and automate these processes.

Summary

Don't feel obligated to deliver 10 high-tech graphs every quarter if they just aren't passing your litmus test. Begin with your narrative overview statements, incorporate existing documentation from internal committees and workgroups by way of appendix, feel free to use examples instead of delivering exhaustive lists, and when you do get to quantitative metrics ensure they are valuable.